By: Kassandra Lippincott

How TikTok Is Rewiring What We Believe

The Feed That Thinks For You

Over the past few years, social media has shifted from a space where people actively search for information to one in which information is delivered to them constantly, and often without question. Platforms like TikTok are no longer just entertainment apps. They have become central spaces where users encounter news, political discourse, and cultural narratives.

What makes this evolution significant is not simply the presence of misinformation, but how it is experienced. Content is rarely slowed down, questioned, or verified. Instead, it is consumed quickly, emotionally, and repeatedly. I’ve seen this play out in real life: family members have sent me videos that felt urgent and real, and only on a second look did it become clear they were AI-generated or misleading. The issue is not just that false information exists, but that it feels believable in the moment it’s encountered.

TikTok’s structure plays a major role in this. Unlike on earlier platforms, users rarely search for content; it finds them. The “For You Page” is built on behavioral data, such as watch time, likes, and interactions, and it constantly refines what appears next. As Schellewald (2021) explains, TikTok’s appeal lies in the experience of scrolling itself: a continuous, personalized stream designed to hold attention.

Over time, this creates a feedback loop. The more users engage with certain ideas or tones, the more those same ideas are reinforced. Zimmer et al. (2023) describe this as selective exposure, in which users gravitate toward content that aligns with their existing beliefs, thereby strengthening confirmation bias. What feels like a personal choice is often guided by an invisible system that organizes what is seen and repeated.

This dynamic can also be understood through the broader framework of algorithmic culture, in which decision-making processes that were once human are increasingly automated and embedded within platform infrastructures (Striphas, 2015). Rather than users consciously selecting what they want to see, the algorithm continuously anticipates and predicts their preferences based on past behavior. This creates an environment where visibility itself is structured by data-driven logic. Over time, this shifts authority away from traditional institutions and toward platform systems that operate without full transparency. As a result, users are not only consuming content, but are participating in a system that subtly guides perception, shaping what appears relevant, urgent, or true.

And repetition creates familiarity, which in turn creates trust.

When News Becomes Performance

If TikTok determines how content reaches users, the next question is what kind of content actually thrives. Increasingly, it’s content that blurs the line between fact, opinion, and performance.

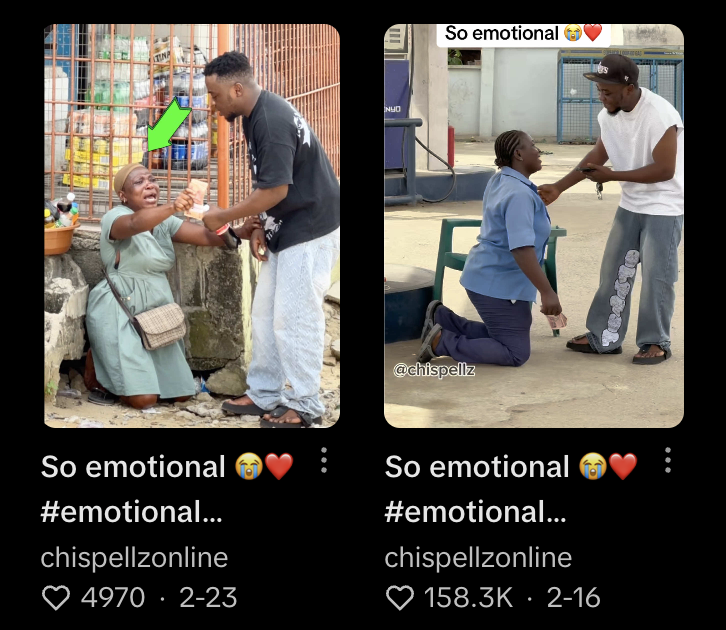

Misinformation rarely appears as an obvious falsehood. Instead, it appears in familiar formats such as storytimes, casual commentary, or short “explainer” videos. It doesn’t ask to be verified; instead, it insists on a specific emotional response.

This example reflects a broader shift toward humor, irony, and familiarity, qualities that lower our guard. When information is packaged in a recognizable, entertaining format, it bypasses skepticism. The viewer is not evaluating accuracy but reacting to something that feels familiar.

At the same time, the rise of AI-generated content intensifies this effect. As Bontridder and Poullet (2021) explain, AI allows for the creation of highly realistic yet misleading media at scale. These videos don’t look fake; they look polished, intentional, and real enough. On TikTok, where content moves quickly, that’s often all it takes.

This changes how information functions within digital environments. Rather than being evaluated based on credibility or source, content is increasingly assessed through its ability to capture attention and generate engagement. This aligns with what scholars describe as the attention economy, in which visibility is determined not by accuracy but by performance (Terranova, 2012; Davenport & Beck, 2001).

Within this system, humor, irony, and familiarity act as strategic tools that increase shareability, allowing content to travel further regardless of its factual grounding. As a result, the distinction between entertainment and information becomes increasingly unstable, making it more difficult for users to recognize when they are engaging with something meant to inform rather than to provoke.

What makes this especially powerful is the shift in credibility. TikTok doesn’t rely on traditional authority. Instead, it prioritizes relatability. As Grantham et al. (2025) note, creators collapse the boundary between the personal and the political, presenting opinions in ways that feel intimate and authentic.

A person speaking casually into their phone can feel more trustworthy than a formal news source, not because they are more accurate, but because they feel more real.

Importantly, this evolution does not occur in isolation. AI-generated content builds upon an already existing environment in which visual media is trusted as evidence. Historically, visual representation has been associated with authenticity and proof, reinforcing the assumption that seeing is believing (Mitchell, 1994). However, as artificial intelligence advances, this assumption becomes increasingly unreliable. The ability to generate realistic but fabricated media challenges the foundations of how truth is identified and verified. In this context, the problem is not simply that false content exists, but that the tools used to distinguish truth from falsehood are becoming less effective.

Misinformation spreads not because it is convincing in a logical sense, but because it is effective in an emotional one.

It changes not just what we see, but how we process it in the first place.

You Didn’t Think It Was True. You Felt It Was.

What makes TikTok particularly powerful is not just what it shows, but how it makes users feel before they have time to think.

Information on the platform is processed less through careful evaluation and more through emotional response. Fear, outrage, urgency, and relatability act as entry points into understanding. These reactions happen quickly, before conscious analysis begins.

Research on TikTok and political subjectivity supports this. Errázuriz et al. (2026) describe this as “affective pedagogy,” where users learn through emotional and embodied responses rather than purely rational thinking. Over time, repeated exposure to emotionally charged content leads users to associate certain feelings with certain ideas.

This perspective can be further understood through affect theory, which emphasizes that emotional responses often precede conscious thought. Rather than individuals forming beliefs through deliberate reasoning, affect operates at a pre-conscious level, shaping how information is received before it is interpreted (Shouse, 2005; Hardt, 1999). In digital environments like TikTok, where exposure is rapid and continuous, this process becomes amplified.

Because users are repeatedly exposed to emotionally charged content, affective responses build up over time. This accumulation does not immediately produce belief, but it shapes the framework through which future information is interpreted, making certain narratives feel more intuitive or “true” based on prior emotional associations.

What feels true becomes just as powerful as what is true.

This dynamic extends beyond belief formation. It affects how people move through daily life. The constant stream of emotionally intense content creates a kind of cognitive overload in which users are always reacting but rarely reflecting.

Scrolling becomes automatic: time seems to collapse, and attention fragments. Slowly, the boundary between being informed and being overwhelmed begins to blur.

What This Is Doing To Us

The long-term impact of this environment is not just misinformation; it is a transformation in how we think and pay attention.

Users are not only consuming content but also being shaped by it. Attention spans shorten, and users come to rely more on reaction, leaving little room for reflection.

The issue is no longer just whether something is true or false, but how constant exposure to emotionally driven content reshapes perception, focus, and even presence in everyday life.

Doomscrolling is no longer just a habit to be broken, but something that begins to resemble a condition. Users are continuously engaged and reacting, yet increasingly disconnected from the moment in front of them.

From a broader perspective, this raises concerns about the long-term implications of sustained engagement within affect-driven media environments. Attention is a limited cognitive resource, and constant exposure to high-intensity content can reduce the capacity for sustained focus and reflection (Simon, 1971; Davenport & Beck, 2001).

If attention is consistently directed toward emotionally stimulating content, users may become conditioned to seek intensity over depth. This can contribute to shortened attention spans, reduced tolerance for complexity, and a diminished capacity for critical reflection. In this sense, the impact of platforms like TikTok extends beyond misinformation itself, influencing not only what users believe but how they process information and engage with the world more generally.

More broadly, platforms are not only shaping attention but also governing how users engage with information, reinforcing systems of control that operate through behavior rather than direct instruction.

If platforms like TikTok are shaping how we encounter information, and if that information is increasingly processed through emotion rather than analysis, then belief itself begins to change.

Truth becomes less about verification and more about resonance.

And the question shifts from “what do we believe?” to “how have we been taught to believe it, and who benefits from that shift?”

References

Bontridder, N., & Poullet, Y. (2021). Artificial intelligence and disinformation: Ethical and legal considerations. Journal of Information, Communication and Ethics in Society.

Davenport, T. H., & Beck, J. C. (2001). The attention economy: Understanding the new currency of business. Harvard Business School Press.

Errázuriz, T., et al. (2026). Political subjectification and affective pedagogy on TikTok. (Course reading)

Grantham, S., et al. (2025). Authenticity, relatability, and political discourse on TikTok. (Course reading)

Hardt, M. (1999). Affective labor. Boundary 2, 26(2), 89–100.

Mitchell, W. J. T. (1994). Picture theory: Essays on verbal and visual representation. University of Chicago Press.

Schellewald, A. (2021). Communicative forms on TikTok. International Journal of Communication.

Shouse, E. (2005). Feeling, emotion, affect. M/C Journal, 8(6).

Simon, H. A. (1971). Designing organizations for an information-rich world. In Computers, communications, and the public interest.

Striphas, T. (2015). Algorithmic culture. European Journal of Cultural Studies, 18(4–5), 395–412.

Terranova, T. (2012). Attention, economy and the brain. Culture Machine, 13.

Zimmer, F., et al. (2023). Filter bubbles and selective exposure in digital environments.
